Text-to-image generation has moved rapidly from experimental research to production systems that power design tools, marketing platforms, game asset creation, and creative automation. Stable Diffusion sits at the center of this shift: it is an open-source latent diffusion model that developers can fully control and extend. This blog explores how to build end-to-end Stable Diffusion pipelines in Python, focusing on practical implementation rather than abstract theory. We look at how text prompts are transformed into images, how diffusion models operate under the hood, and how Python frameworks make the workflow accessible and scalable. With growing demand for custom visual generation, on-device inference, and cost-efficient generation, understanding Stable Diffusion pipelines is becoming a core skill for AI engineers and product teams.

Deep Dive into the Topic

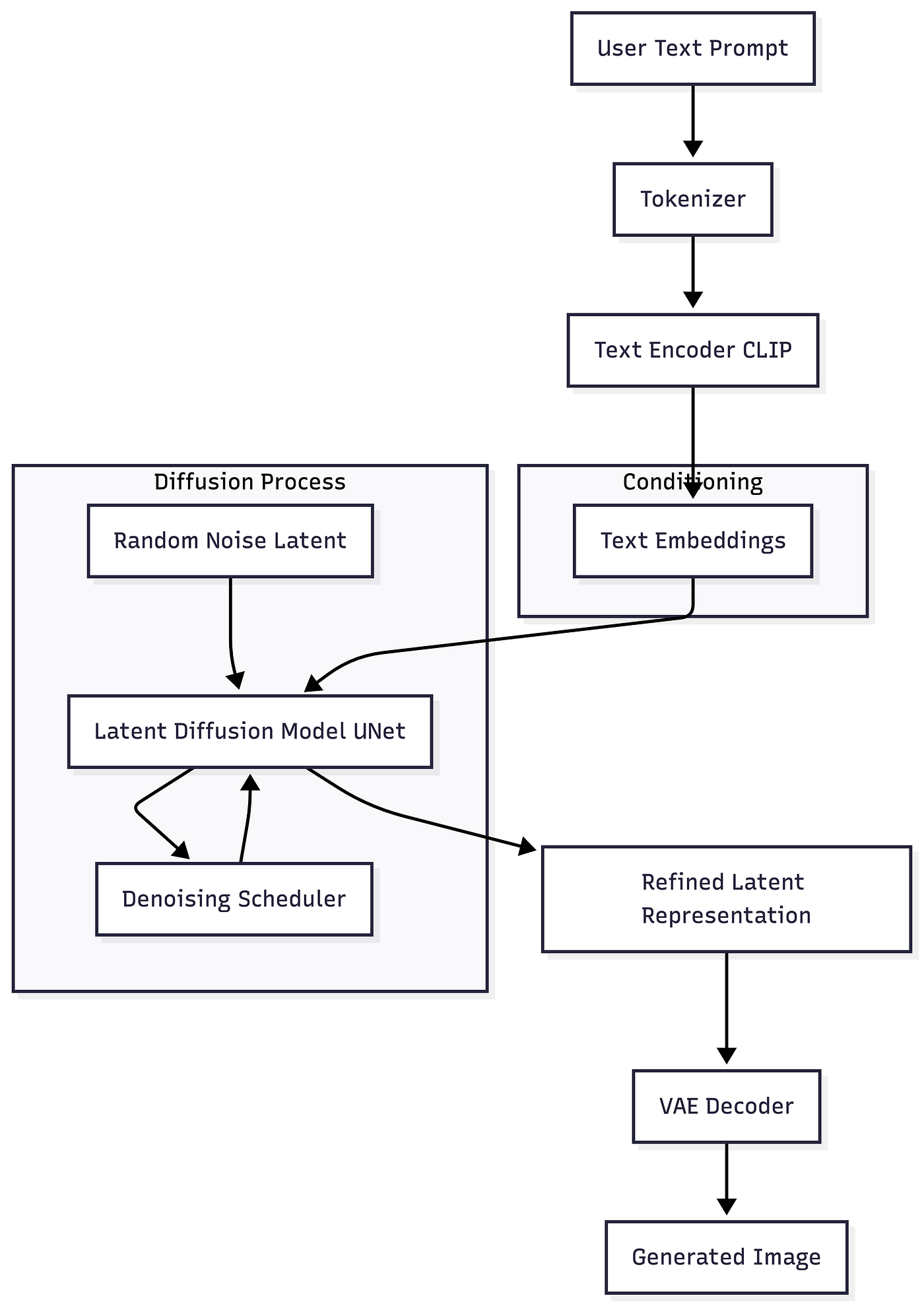

Stable Diffusion is a latent text-to-image model: it turns a natural-language prompt into an image by iteratively denoising random noise in a compressed latent space. Earlier diffusion models ran the denoising process directly on pixels. Stable Diffusion runs it on a much smaller latent representation produced by an autoencoder, so each denoising step processes far fewer values and needs less compute and memory.
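To make the size difference concrete, here is a small back-of-the-envelope sketch. It assumes the settings commonly associated with Stable Diffusion v1 checkpoints (a spatial downsampling factor of 8 and 4 latent channels); other checkpoints may use different values, and the helper names are illustrative.

```python
def latent_shape(height, width, downsample=8, latent_channels=4):
    """Return (channels, height, width) of the latent tensor for a given image size.

    The defaults (factor 8, 4 channels) are assumptions matching SD v1-style models.
    """
    if height % downsample or width % downsample:
        raise ValueError("height and width must be divisible by the downsampling factor")
    return (latent_channels, height // downsample, width // downsample)


def compression_ratio(height, width, image_channels=3, downsample=8, latent_channels=4):
    """How many times fewer values the latent holds compared with the RGB image."""
    c, h, w = latent_shape(height, width, downsample, latent_channels)
    return (image_channels * height * width) / (c * h * w)


print(latent_shape(512, 512))       # (4, 64, 64)
print(compression_ratio(512, 512))  # 48.0
```

Under these assumptions, a 512x512 RGB image is denoised as a 4x64x64 tensor, roughly 48 times fewer values per step.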

At a high level, the pipeline has three core components. A text encoder (CLIP in the original Stable Diffusion releases) converts the prompt into embeddings. A denoising network, a U-Net in those releases, progressively refines noise into a structured latent conditioned on those embeddings. A decoder, the decoder half of a variational autoencoder (VAE), then maps the final latent back into pixel space to form the image.
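The three stages can be sketched by wiring the individual components together by hand with Diffusers and Transformers. This is a minimal illustration, not a production implementation: the checkpoint ID `CompVis/stable-diffusion-v1-4`, the PNDM scheduler, and all default parameter values are assumptions, and the function names are made up for this example. It also uses classifier-free guidance, a common way to strengthen prompt conditioning that the text above does not cover. Heavy imports are deferred into the function so the module loads without pulling in PyTorch.

```python
def apply_guidance(noise_uncond, noise_text, guidance_scale):
    """Classifier-free guidance: move the prediction toward the prompt-conditioned one."""
    return noise_uncond + guidance_scale * (noise_text - noise_uncond)


def generate_manually(prompt, model_id="CompVis/stable-diffusion-v1-4",
                      steps=25, guidance_scale=7.5, height=512, width=512, seed=0):
    """Run text encoder -> denoising loop -> VAE decoder; returns a (1, 3, H, W) tensor in [0, 1]."""
    import torch
    from transformers import CLIPTextModel, CLIPTokenizer
    from diffusers import AutoencoderKL, PNDMScheduler, UNet2DConditionModel

    device = "cuda" if torch.cuda.is_available() else "cpu"
    tokenizer = CLIPTokenizer.from_pretrained(model_id, subfolder="tokenizer")
    text_encoder = CLIPTextModel.from_pretrained(model_id, subfolder="text_encoder").to(device)
    unet = UNet2DConditionModel.from_pretrained(model_id, subfolder="unet").to(device)
    vae = AutoencoderKL.from_pretrained(model_id, subfolder="vae").to(device)
    scheduler = PNDMScheduler.from_pretrained(model_id, subfolder="scheduler")

    # Stage 1: text encoder turns the prompt into embeddings.
    def embed(text):
        tokens = tokenizer(text, padding="max_length",
                           max_length=tokenizer.model_max_length,
                           truncation=True, return_tensors="pt")
        return text_encoder(tokens.input_ids.to(device))[0]

    with torch.no_grad():
        # Empty-prompt embeddings first, prompt embeddings second (needed for guidance).
        embeddings = torch.cat([embed(""), embed(prompt)])

    # Stage 2: start from random latent noise and denoise it step by step.
    generator = torch.Generator(device="cpu").manual_seed(seed)
    latents = torch.randn((1, unet.config.in_channels, height // 8, width // 8),
                          generator=generator).to(device)
    scheduler.set_timesteps(steps)
    latents = latents * scheduler.init_noise_sigma
    for t in scheduler.timesteps:
        # Duplicate the latents so one U-Net call covers both guidance branches.
        model_input = scheduler.scale_model_input(torch.cat([latents] * 2), t)
        with torch.no_grad():
            noise = unet(model_input, t, encoder_hidden_states=embeddings).sample
        noise_uncond, noise_text = noise.chunk(2)
        guided = apply_guidance(noise_uncond, noise_text, guidance_scale)
        latents = scheduler.step(guided, t, latents).prev_sample

    # Stage 3: VAE decoder maps the final latent back to pixel space.
    with torch.no_grad():
        image = vae.decode(latents / vae.config.scaling_factor).sample
    return (image / 2 + 0.5).clamp(0, 1)
```

In practice the prebuilt pipeline classes in Diffusers wrap these same steps, so writing the loop by hand is mainly useful for understanding or for customizing an individual stage.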

Python has become the dominant language for building Stable Diffusion pipelines thanks to libraries like Hugging Face Diffusers, PyTorch, and Accelerate. These tools allow developers to load pretrained checkpoints, customize schedulers, fine-tune models, and deploy inference pipelines with minimal boilerplate.

This architecture is highly modular. Developers can swap schedulers, replace text encoders, apply LoRA fine-tuning, or add safety and validation layers. In real-world systems, Stable Diffusion is often combined with orchestration frameworks, vector databases for prompt retrieval, and monitoring layers to track output quality.
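As a rough illustration of that modularity, the sketch below swaps the scheduler on an already loaded Diffusers pipeline and attaches LoRA weights. The choice of `DPMSolverMultistepScheduler` is only one example of a replacement scheduler, the function names are illustrative, and the LoRA path is a hypothetical placeholder for weights you have trained or downloaded yourself.

```python
def use_dpm_solver(pipe):
    """Replace the pipeline's scheduler, reusing the existing scheduler's config."""
    # Imported lazily so this module can be loaded without Diffusers installed.
    from diffusers import DPMSolverMultistepScheduler

    pipe.scheduler = DPMSolverMultistepScheduler.from_config(pipe.scheduler.config)
    return pipe


def add_lora(pipe, lora_path):
    """Attach LoRA weights to the pipeline.

    `lora_path` is a hypothetical local path or Hub repo ID supplied by the caller.
    """
    pipe.load_lora_weights(lora_path)
    return pipe
```

Because both helpers return the pipeline, they can be chained after loading, for example `add_lora(use_dpm_solver(pipe), "path/to/lora")`, where the path is again a placeholder.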

Code Sample
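The following is a minimal end-to-end sketch using the Hugging Face Diffusers `StableDiffusionPipeline`. Treat it as a starting point under stated assumptions rather than a reference implementation: the checkpoint ID `CompVis/stable-diffusion-v1-4`, the default step count, guidance scale, seed, prompt, and output filename are all illustrative choices, and `GenerationConfig`, `load_pipeline`, `generate`, and `main` are names invented for this example.

```python
from dataclasses import dataclass


@dataclass
class GenerationConfig:
    """Illustrative settings; every default here is an assumption, not a recommendation."""
    model_id: str = "CompVis/stable-diffusion-v1-4"
    num_inference_steps: int = 30
    guidance_scale: float = 7.5
    height: int = 512
    width: int = 512
    seed: int = 42

    def __post_init__(self):
        # The latent grid is 8x smaller than the image, so sizes must divide evenly.
        if self.height % 8 or self.width % 8:
            raise ValueError("height and width must be multiples of 8")


def load_pipeline(config):
    """Load pretrained weights and move the pipeline to GPU when one is available."""
    import torch
    from diffusers import StableDiffusionPipeline

    device = "cuda" if torch.cuda.is_available() else "cpu"
    # Half precision saves memory on GPU; CPU inference stays in float32.
    dtype = torch.float16 if device == "cuda" else torch.float32
    pipe = StableDiffusionPipeline.from_pretrained(config.model_id, torch_dtype=dtype)
    return pipe.to(device)


def generate(pipe, prompt, config, negative_prompt=None):
    """Generate one PIL image for the prompt using a seeded generator."""
    import torch

    generator = torch.Generator(device="cpu").manual_seed(config.seed)
    result = pipe(
        prompt,
        negative_prompt=negative_prompt,
        height=config.height,
        width=config.width,
        num_inference_steps=config.num_inference_steps,
        guidance_scale=config.guidance_scale,
        generator=generator,
    )
    return result.images[0]


def main():
    """Example entry point; the first run downloads several gigabytes of weights."""
    config = GenerationConfig()
    pipe = load_pipeline(config)
    image = generate(pipe, "a watercolor painting of a lighthouse at dawn", config)
    image.save("lighthouse.png")
```

Calling `main()` runs the whole flow. Keeping the settings in a dataclass makes it easy to log or version the exact parameters behind each generated image.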

Pros of Stable Diffusion

- Open-source flexibility that gives full control over model behavior and deployment

- Efficient latent-space diffusion that enables faster inference and lower compute cost

- Strong community support with continuous improvements and pretrained checkpoints

- Highly customizable via LoRA, DreamBooth, and textual inversion

- Easy Python integration for rapid prototyping and production pipelines

Industries Using Stable Diffusion

Creative design, marketing, ecommerce, gaming, automotive visualization, and media production are among the industries adopting Stable Diffusion-based systems.

Industry Applications

Healthcare

Used for generating synthetic medical imagery to augment training datasets while preserving patient privacy.

Finance

Applied in marketing content automation and visual storytelling for financial products and campaigns.

Retail

Powers AI-generated product visuals, lifestyle imagery, and virtual try-on concepts at scale.

Automotive

Used to visualize concept cars, interior designs, and environment simulations before physical prototyping.

Legal

Supports illustrative content generation for presentations, reconstructions, and educational materials without manual design effort.

How Nivalabs AI Can Assist

- Nivalabs AI brings deep expertise in building end-to-end Stable Diffusion pipelines using Python and PyTorch

- Nivalabs AI specializes in customizing diffusion models with LoRA and domain-specific fine-tuning

- Nivalabs AI designs scalable inference systems optimized for cloud and on-premises deployments

- Nivalabs AI integrates Stable Diffusion with existing APIs, data platforms, and business workflows

- Nivalabs AI ensures security, compliance, and content moderation in generative AI systems

- Nivalabs AI delivers production-grade MLOps, including monitoring, logging, and model versioning

- Nivalabs AI helps optimize cost and performance through quantization and scheduler tuning

- Nivalabs AI builds intuitive frontends and internal tools powered by diffusion models

- Nivalabs AI supports enterprise adoption with architecture reviews and best practices

- Nivalabs AI partners with teams from ideation to full-scale generative AI deployment

References

- https://huggingface.co/docs/diffusers/index

- https://github.com/CompVis/stable-diffusion

- https://arxiv.org/abs/2112.10752

Conclusion

Stable Diffusion has changed how developers approach image generation by making high-quality text-to-image models accessible, customizable, and practical to run in production. In this blog, we explored the core architecture, outlined how a Python pipeline fits together, and examined real-world applications across industries. For developers and decision makers alike, Stable Diffusion offers a rare combination of creative power and engineering control. The next frontier lies in deeper personalization, multimodal pipelines, and tighter integration with business systems. Now is a good time to experiment, build, and scale with Stable Diffusion as a foundational component of AI-driven products.