Large language models (LLMs) have evolved far beyond simple text generators. Modern LLMs can reason, retrieve information, call APIs, interact with databases, and orchestrate complex workflows through structured tool usage. This capability is known as LLM function calling, and it is rapidly becoming the backbone of intelligent AI assistants.

Instead of answering questions purely from model memory, LLMs can now invoke external tools such as calculators, search engines, knowledge bases, and enterprise APIs. This can substantially improve accuracy, reliability, and real-world usefulness, because answers are grounded in live data and deterministic computation. Developers are increasingly using Python frameworks to build agent systems that combine LLM reasoning with external actions.

In this article, we explore how LLM function calling works, how to implement tool-based AI assistants in Python, and how modern frameworks enable scalable agent architectures for real production systems.

Understanding LLM Function Calling

Traditional LLM applications follow a simple pipeline.

- The user prompt enters the model

- The model generates a text response

- The response is returned to the user

While useful, this architecture has limitations. The model cannot access live data, cannot execute calculations reliably, and cannot interact with external systems.

Function calling changes this paradigm.

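The model decides when to call an external tool instead of directly generating an answer. It does not run the tool itself: it emits a structured request naming the tool and its arguments, the application executes that request, and the result is passed back to the model. These tools may include APIs, databases, search systems, or internal enterprise services.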

For example, an AI assistant can:

- Call a weather API

- Query a database

- Execute a Python calculation

- Retrieve knowledge from a vector store

- Trigger an enterprise workflow

The model analyzes the user request and determines which tool should be used.
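The selection step described above can be sketched in a few lines. This is a minimal illustration that uses only the standard library: the tool names (`get_weather`, `multiply`), the layout of `TOOL_SPECS`, and the hard-coded `model_output` string are hypothetical stand-ins for what a real model and provider SDK would supply.

```python
import json

# Hypothetical tools; a real system would call live services here.
def get_weather(city: str) -> dict:
    return {"city": city, "forecast": "sunny", "temp_c": 21}  # canned data

def multiply(a: float, b: float) -> float:
    return a * b

# Descriptions the model would be shown so it can choose a tool.
# The JSON-Schema-style layout is an assumption for illustration.
TOOL_SPECS = [
    {
        "name": "get_weather",
        "description": "Return the current weather for a city.",
        "parameters": {
            "type": "object",
            "properties": {"city": {"type": "string"}},
            "required": ["city"],
        },
    },
    {
        "name": "multiply",
        "description": "Multiply two numbers.",
        "parameters": {
            "type": "object",
            "properties": {"a": {"type": "number"}, "b": {"type": "number"}},
            "required": ["a", "b"],
        },
    },
]

# Maps each declared tool name to the Python function that implements it.
TOOL_FUNCTIONS = {"get_weather": get_weather, "multiply": multiply}

def dispatch(model_output: str):
    """Parse the model's structured tool call and run the matching function."""
    call = json.loads(model_output)
    func = TOOL_FUNCTIONS[call["name"]]
    return func(**call["arguments"])

# Stand-in for what a model might emit for "What's the weather in Paris?"
model_output = '{"name": "get_weather", "arguments": {"city": "Paris"}}'
print(dispatch(model_output))
```

The key point is the division of labor: the model only produces the name and arguments, while the application owns the actual execution.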

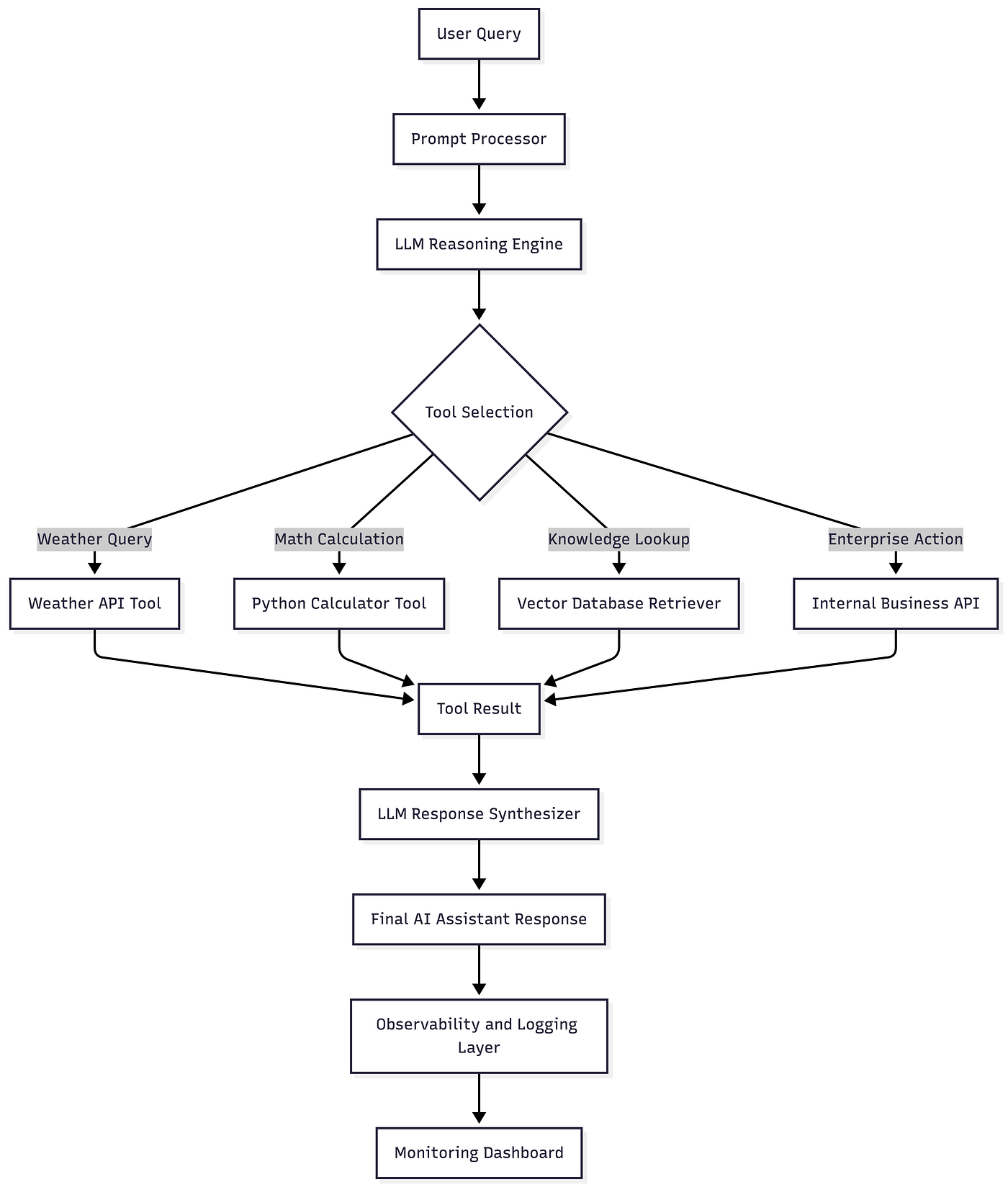

Architecture of LLM Tool Calling Systems

A typical AI assistant architecture includes several layers.

- User Interface

- Prompt Processing Layer

- LLM Reasoning Engine

- Tool Registry

- Tool Execution Engine

- Response Synthesizer

The LLM acts as the reasoning brain that decides when a tool should be invoked.
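One way to picture how these layers fit together is the sketch below. Every name in it (`ToolRegistry`, `check_inventory`, `reasoning_engine`, and so on) is hypothetical, and the reasoning engine is a keyword rule standing in for a real LLM, so treat it as an outline of the data flow and not as a production design.

```python
from dataclasses import dataclass
from typing import Any, Callable, Dict, Optional

@dataclass
class Tool:
    name: str
    description: str
    func: Callable[..., Any]

class ToolRegistry:
    """Tool registry layer: holds the tools the assistant may use."""

    def __init__(self) -> None:
        self._tools: Dict[str, Tool] = {}

    def register(self, name: str, description: str):
        def decorator(func):
            self._tools[name] = Tool(name, description, func)
            return func
        return decorator

    def get(self, name: str) -> Tool:
        return self._tools[name]

    def describe(self) -> str:
        return "\n".join(f"{t.name}: {t.description}" for t in self._tools.values())

registry = ToolRegistry()

@registry.register("check_inventory", "Return units in stock for a product code.")
def check_inventory(sku: str) -> int:
    return {"SKU-1": 12, "SKU-2": 0}.get(sku, 0)  # canned data

def process_prompt(user_text: str) -> str:
    """Prompt processing layer: combine tool descriptions with the request."""
    return f"Available tools:\n{registry.describe()}\n\nUser: {user_text.strip()}"

def reasoning_engine(prompt: str) -> Optional[dict]:
    """Stub for the LLM: a keyword rule stands in for model reasoning."""
    user_line = prompt.rsplit("User: ", 1)[-1]
    for token in user_line.replace("?", " ").split():
        if token.startswith("SKU-"):
            return {"tool": "check_inventory", "args": {"sku": token}}
    return None  # no tool needed

def execute(call: dict) -> Any:
    """Tool execution layer: look up the tool and run it."""
    return registry.get(call["tool"]).func(**call["args"])

def synthesize(call: Optional[dict], result: Any) -> str:
    """Response synthesizer: turn the raw tool result into a reply."""
    if call is None:
        return "No tool was needed for this request."
    return f"{call['args']['sku']} has {result} units in stock."

def handle(user_text: str) -> str:
    prompt = process_prompt(user_text)
    call = reasoning_engine(prompt)
    result = execute(call) if call else None
    return synthesize(call, result)

print(handle("How many of SKU-1 are left?"))
```

Keeping the registry separate from the execution step means new tools can be added with a decorator, without touching the request-handling code.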


Code Example

Install Dependencies

Python Implementation
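A minimal sketch of the function calling loop follows. It runs on the Python standard library alone, so nothing needs to be installed to try it; a real deployment would replace the hypothetical `FakeModel` class with a provider SDK client. The message fields (`role`, `content`, `tool_call`) loosely mirror chat-style APIs and are assumptions made for illustration, as is the `convert_currency` tool.

```python
import json
from typing import Callable, Dict, List

def convert_currency(amount: float, rate: float) -> float:
    """Deterministic tool: convert an amount using a supplied exchange rate."""
    return round(amount * rate, 2)

TOOLS: Dict[str, Callable] = {"convert_currency": convert_currency}

class FakeModel:
    """Hypothetical stand-in for an LLM client.

    On the first turn it requests a tool call. Once a tool result is the
    latest message in the history, it returns a final text answer.
    """

    def chat(self, messages: List[dict]) -> dict:
        last = messages[-1]
        if last["role"] == "tool":
            return {"role": "assistant", "content": f"That is {last['content']} EUR."}
        return {
            "role": "assistant",
            "content": None,
            "tool_call": {
                "name": "convert_currency",
                "arguments": json.dumps({"amount": 100, "rate": 0.5}),
            },
        }

def run_assistant(model, user_text: str, max_steps: int = 5) -> str:
    """Loop until the model answers in text instead of requesting a tool."""
    messages = [{"role": "user", "content": user_text}]
    for _ in range(max_steps):
        reply = model.chat(messages)
        messages.append(reply)
        call = reply.get("tool_call")
        if call is None:
            return reply["content"]  # final answer
        # Execute the requested tool and feed the result back to the model.
        args = json.loads(call["arguments"])
        result = TOOLS[call["name"]](**args)
        messages.append(
            {"role": "tool", "name": call["name"], "content": json.dumps(result)}
        )
    raise RuntimeError("model did not produce a final answer")

print(run_assistant(FakeModel(), "Convert 100 USD to EUR at a rate of 0.5"))
```

The `max_steps` cap is a deliberate choice: it stops a model that keeps requesting tools from looping forever.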

Pros of LLM Function Calling

• Improved accuracy

External tools allow LLMs to fetch real-time data, which reduces hallucinations by grounding answers in retrieved facts instead of model memory.

• Better scalability

AI assistants can interact with multiple APIs and services across enterprise systems.

• Enhanced reliability

Critical operations such as calculations and database queries are handled by deterministic tools.

• Security and compliance

Function calling allows developers to restrict what actions an AI assistant can perform.

• Strong developer ecosystem

Frameworks like LangChain provide extensive tooling for agent orchestration.
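Two of the points above, deterministic tools and restricted actions, can be illustrated together. The sketch below is one possible approach, not a prescribed pattern: `safe_calculate`, `ALLOWED_TOOLS`, and `call_tool` are hypothetical names, and the calculator deliberately supports only basic arithmetic.

```python
import ast
import operator

# Only these arithmetic operators are supported.
_OPS = {
    ast.Add: operator.add,
    ast.Sub: operator.sub,
    ast.Mult: operator.mul,
    ast.Div: operator.truediv,
}

def safe_calculate(expression: str) -> float:
    """Evaluate + - * / arithmetic without eval(); reject anything else."""

    def _eval(node):
        if isinstance(node, ast.Expression):
            return _eval(node.body)
        if (
            isinstance(node, ast.Constant)
            and isinstance(node.value, (int, float))
            and not isinstance(node.value, bool)
        ):
            return node.value
        if isinstance(node, ast.BinOp) and type(node.op) in _OPS:
            return _OPS[type(node.op)](_eval(node.left), _eval(node.right))
        if isinstance(node, ast.UnaryOp) and isinstance(node.op, ast.USub):
            return -_eval(node.operand)
        raise ValueError("unsupported expression")

    return _eval(ast.parse(expression, mode="eval"))

# Allow-list: the assistant can call only the tools named here.
ALLOWED_TOOLS = {"calculate": safe_calculate}

def call_tool(name: str, argument: str):
    """Run a tool requested by the model, refusing anything not allow-listed."""
    if name not in ALLOWED_TOOLS:
        raise PermissionError(f"tool {name!r} is not permitted")
    return ALLOWED_TOOLS[name](argument)

print(call_tool("calculate", "(1200 * 3 + 450) / 2"))
```

Because the arithmetic is done by ordinary code, the same input always yields the same result, and a request for any tool outside the allow-list fails before anything runs.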

Industries Using LLM Tool Calling

Healthcare

Medical AI assistants can retrieve drug interactions from medical databases instead of relying on model memory.

Example: Clinical research assistants retrieving medical literature.

Finance

Financial assistants can query stock APIs and perform financial calculations.

Example: AI investment advisors analyzing market data.

Retail

Retail AI agents can check product inventory and pricing through backend systems.

Example: AI shopping assistants retrieving product availability.

Automotive

Automotive copilots can interact with vehicle diagnostic systems.

Example: AI assistants retrieving engine health data.

Legal

Legal AI tools can search legal databases and case law repositories.

Example: Contract analysis assistants retrieving regulatory references.

How Nivalabs AI Can Assist in This

Organizations looking to deploy intelligent AI assistants require deep expertise in LLM engineering and scalable infrastructure.

• Nivalabs AI specializes in building production-grade AI assistants powered by LLM tool orchestration.

• Nivalabs AI designs scalable architectures that integrate APIs, databases, and enterprise tools into AI systems.

• Nivalabs AI builds secure AI agents that execute business workflows safely and reliably.

• Nivalabs AI develops advanced Python-based frameworks for tool-enabled LLM applications.

• Nivalabs AI helps organizations integrate knowledge graphs and retrieval pipelines into AI assistants.

• Nivalabs AI provides expertise in deploying AI assistants with monitoring, observability, and governance.

• Nivalabs AI builds custom AI copilots for enterprise automation and decision support systems.

• Nivalabs AI ensures production readiness through scalable infrastructure and optimized API integrations.

• Nivalabs AI helps organizations transform traditional software into intelligent AI-driven platforms.

• Nivalabs AI empowers businesses to deploy intelligent AI assistants that operate reliably at scale.

References

OpenAI Function Calling Documentation

https://platform.openai.com/docs/guides/function-calling

LangChain Agents Documentation

https://python.langchain.com/docs/modules/agents/

Conclusion

LLM function calling represents one of the most important advances in modern AI application development. By allowing models to interact with external tools, APIs, and enterprise systems, developers can build intelligent assistants that perform real actions rather than simply generating text.

In this article, we explored the concept of tool-enabled LLM architectures, examined the underlying system design, and implemented a working Python example demonstrating function calling.

For developers and organizations building AI products, this capability unlocks an entirely new class of intelligent applications. From enterprise automation agents to domain-specific copilots, the ability to combine reasoning with tool execution is transforming how software systems operate.

The next generation of AI systems will not just answer questions. They will reason, act, retrieve knowledge, and orchestrate complex workflows. Teams that master LLM tool usage today will define the intelligent platforms of tomorrow.