Large Language Models and autonomous AI agents are rapidly becoming core components of modern software systems. From AI copilots and automated customer support to intelligent research assistants, organizations are deploying LLM powered systems in production environments at an unprecedented pace. However, once these systems go live, a major challenge emerges. How do you monitor, debug, and evaluate AI behavior in real time?

This is where AI observability becomes essential. AI observability provides visibility into prompts, model outputs, reasoning chains, latency, token usage, and hallucination risks. It enables engineering teams to build reliable and trustworthy AI systems that can be safely deployed at scale. In this article we explore how to implement AI observability using Python. We discuss architecture patterns, monitoring frameworks, and practical techniques for tracking LLM pipelines and agent workflows in production environments.

What is AI Observability

AI observability refers to the practice of monitoring, tracing, and evaluating AI systems operating in production environments. Traditional software observability relies on logs, metrics, and traces. AI systems introduce additional complexity because their outputs are probabilistic rather than deterministic.

For example an LLM based agent might produce different answers for the same prompt depending on context, temperature settings, or retrieved knowledge. Without proper monitoring, teams cannot understand why failures occur or how to fix them.

AI observability introduces specialized monitoring signals such as:

- Prompt inputs

- Model outputs

- Token usage

- Latency metrics

- Intermediate reasoning steps

- Tool calls executed by agents

- Hallucination detection metrics

- Response evaluation scores

These signals provide transparency into how AI systems behave in real-world scenarios.

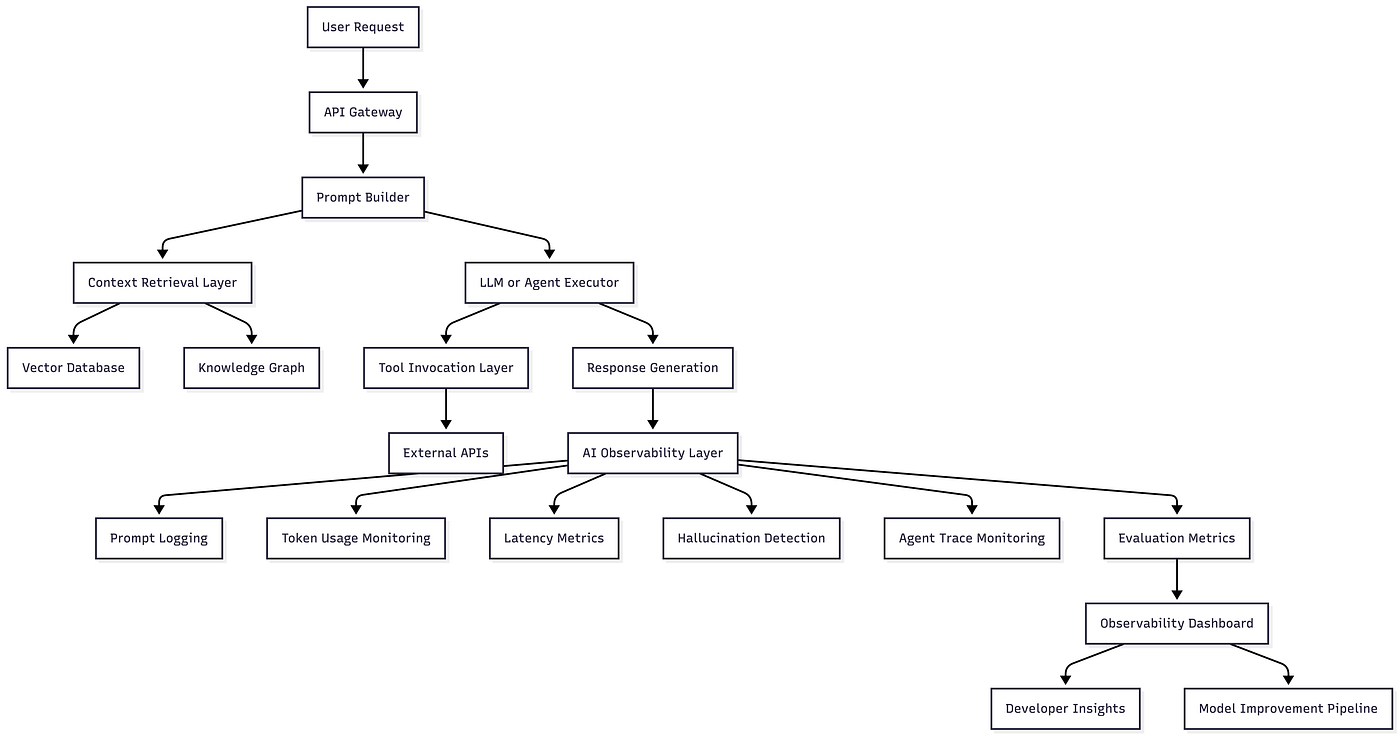

AI Observability Architecture

A production-grade LLM observability pipeline typically contains the following layers.

Input collection layer

Captures prompts, metadata, and user queries.

Execution layer

Runs LLM pipelines, RAG workflows, or AI agents.

Monitoring layer

Tracks metrics such as latency, token usage, response quality, and reasoning steps.

Evaluation layer

Measures hallucination risk, factual accuracy, and relevance.

Visualization layer

Displays metrics through dashboards and analytics tools.

Press enter or click to view image in full size

Detailed Code Sample

Install Dependencies

Python Implementation

Pros of AI Observability

Improved Reliability

Observability allows teams to detect hallucinations and model failures before users experience them.

Debuggable AI Systems

Tracing prompt pipelines makes debugging complex agent systems significantly easier.

Cost Optimization

Monitoring token usage helps companies control LLM API costs.

Security and Prompt Injection Detection

Observability platforms can flag suspicious prompts and malicious instructions.

Compliance and Governance

Audit logs allow organizations to maintain transparency and regulatory compliance.

Scalability

Observability infrastructure ensures that AI systems continue to perform reliably as traffic increases.

Industries Using AI Observability

Healthcare

Healthcare AI systems assist doctors with diagnosis and treatment recommendations. Observability ensures that hallucinated medical advice is detected before reaching clinicians.

Example: Monitoring AI clinical assistants used for patient triage.

Finance

Financial institutions deploy LLMs for fraud analysis, financial research, and risk modeling.

Observability helps detect incorrect financial reasoning that could affect trading decisions.

Example: Monitoring AI investment research assistants.

Retail

Retail companies deploy AI agents for product search, recommendation engines, and automated customer support.

Observability ensures product recommendations remain relevant and accurate.

Example: Monitoring conversational shopping assistants.

Automotive

Automotive companies use AI for autonomous driving simulations and customer vehicle assistants.

Observability ensures safe decision-making and reliable responses.

Example: Monitoring vehicle diagnostic AI copilots.

Legal

Legal AI tools analyze contracts, generate legal summaries, and assist attorneys with research.

Observability ensures that the generated legal advice remains grounded in real documents.

Example: Monitoring AI contract analysis tools.

How PySquad Can Assist in This

Organizations looking to deploy reliable AI observability systems often require deep expertise in LLM infrastructure, monitoring pipelines, and AI governance frameworks. This is where PySquad becomes a strategic technology partner.

• PySquad specializes in building production-ready AI observability systems that monitor LLM pipelines and agent-based architectures.

• PySquad designs scalable monitoring infrastructures that capture prompts, traces, and model performance metrics across distributed AI services.

• PySquad integrates modern observability frameworks such as LangChain tracing, OpenTelemetry, and real-time monitoring dashboards.

• PySquad helps enterprises detect hallucinations and improve trustworthy AI deployments using structured evaluation pipelines.

• PySquad builds custom AI monitoring platforms tailored to business workflows and regulatory requirements.

• PySquad provides deep expertise in Python-based AI infrastructure for LLM applications and agent orchestration frameworks.

• PySquad helps organizations optimize token usage and reduce operational costs through advanced observability analytics.

• PySquad delivers secure AI deployments with monitoring systems that detect prompt injection and abnormal model behavior.

• PySquad supports continuous improvement pipelines that use observability insights to refine prompts and models.

• PySquad enables enterprises to scale AI systems from prototypes to production-grade platforms confidently.

References

LangChain Documentation: https://python.langchain.com

OpenTelemetry Python: https://opentelemetry.io/docs/instrumentation/python/

Arize AI Observability Platform: https://arize.com

Weights and Biases LLM Monitoring: https://wandb.ai

LangSmith Observability Platform: https://smith.langchain.com

Conclusion

As LLMs and autonomous AI agents become core components of modern software systems, monitoring them effectively becomes non-negotiable. AI observability introduces the visibility required to understand model behavior, detect hallucinations, monitor costs, and debug complex reasoning pipelines.

In this guide, we explored how observability works in LLM systems, examined the architecture behind monitoring pipelines, and built a Python example that tracks latency and hallucination metrics.

For developers building agent-based systems, observability is not simply a debugging tool. It is a foundation for building trustworthy AI systems that organizations can rely on in production.

The future of AI engineering will revolve around hybrid AI systems that combine neural networks, symbolic reasoning, and structured validation layers. Observability will play a central role in ensuring these systems remain transparent, reliable, and aligned with human expectations.

Teams that invest in AI observability today will be the ones capable of scaling intelligent systems tomorrow.